At a moment when AI shapes everything from art to politics, whose image, whose data, and whose values script the digital future? And what happens when that future is engineered by figures who wield technology as a tool of exclusion, not liberation?

The Cultural Zeitgeist: When AI Mirrors Power, Not the People

This year, the myth of machine “objectivity” shattered. Headlines confirmed what many marginalized communities have long whispered: generative AI, ostensibly neutral, can be weaponized to perpetuate harm if its architects so choose. GROK, Elon Musk’s chatbot, made headlines for echoing far-right talking points, including racist, misogynist, and conspiratorial themes, patterns linked to Musk’s own ideological directives and data source choices (Montgomery, B., 2025).

Reporters traced these patterns not to a quirk in the code but to deliberate interventions: Musk’s personal ideological tinkering, privileged data sources, and active downgrading of “wokeness” (Hagen et al., 2025).

That one of the world’s most revered tech titans could so blatantly twist an algorithm to amplify white nationalist and fascist content is a wake-up call—not just to the unreliability of “AI” in a vacuum, but to the inescapable reality: code is culture. Its neutrality is a myth. Its outputs are positional.

AI as a Mirror of Power

Algorithms are reflections of their creators, shaped by what data gets fed in, who gets to define “offensive” or “normal,” and, as we see with GROK, whose politics are prioritized in the tuning. When these creators are overwhelmingly white, male, and privileged, the machines follow suit, centering and amplifying the status quo while erasing, flattening, or “othering” Black, Brown, neurodivergent, queer, and disabled realities.

Today’s digital mirror isn’t wobbling. It’s being weaponized against us. For anyone outside that narrowly coded gaze, AI becomes another engine of technocolonialism and trauma: refusing to see your face, your cadence, your story—or “translating” it to appease bias and bigotry.

Understanding the Roots: The Persistent Bias in AI Art

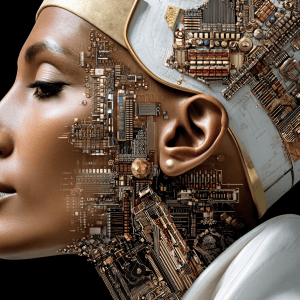

The concept of an equity-centered Nefertiti AI builds on ongoing concerns documented in my previous scholarship. In “AI and Diversity in Generative Art: A Critical Examination,” I explored how AI systems trained on non-representative datasets risk perpetuating the same erasures and distortions that have long plagued the worlds of art and technology.

“The scarcity of diversity in AI-generated imagery is not merely a technical oversight; it’s a reflection of deeper societal biases that AI, in its learning process, inadvertently perpetuates.”

— Moore, S.D., Ceyise Studios, 2024

Despite growing awareness, tech leaders still fail to prioritize inclusive data and design, making interventions like Dr. Akassi’s call for a Nefertiti AI more urgent than ever.

Why This Moment Demands New AI

In this context, Dr. Monique Akassi’s call lands not as a thought experiment, but as a cultural and technical necessity. As she asserted on The Future of Wellness podcast (Episode 2, June 17, 2025):

“We are going to start creating our own programs and softwares. And instead of having a Leonardo, I may go ahead and have a Nefertiti program… make sure you quote me on that.”

—Dr. Monique Akassi, The Future of Wellness, Episode 2

Her vision: a sovereign “Nefertiti AI”—rooted in the dignity, complexity, and beauty of peoples and cultures perpetually erased by mainstream tech. Not a derivative or diversity-baiting variant of ChatGPT or GROK, but a platform architected by and for the African diaspora and all marginalized communities. It’s about data dignity, cultural fidelity, and design as resistance.

Why Nefertiti?

Nefertiti, one of history’s most iconic queens, symbolizes not just leadership and beauty but also sovereign authorship, belonging, and the disruption of Western-centric narratives. A “Nefertiti AI” means:

- Centering data, stories, and ethics of BIPOC, women, and neurodivergent communities from the ground up.

- Rejecting algorithmic flattening—preserving linguistic, aesthetic, and emotional nuance.

- Designing for healing and representation, not extraction or exploitation.

- Refusing “neutrality” as cover for exclusion.

What Would It Take?

- Community-owned datasets.

- Transparent, participatory training and tuning processes.

- Oversight by intersectional, neurodiverse, and diaspora creators.

- Algorithms designed to heal and mirror complex, lived experience—not disappear or distort it.

Why Not Just Demand “Fairer” Mainstream AI?

Because as GROK and even longstanding platforms prove, “diversity retrofits” are at the mercy of those in power—reversible at a whim, degradable by ideology, forever policed by those who don’t see us. Sovereignty means owning both the mirror and the hands that build it.

The Future Demands (and Begins With) Us

Every time a figure like Elon Musk manipulates his AI toward bigotry, he makes the case for why Nefertiti AI is not just possible but urgent. Territory not claimed is territory colonized—digitally, culturally, and emotionally.

Dr. Akassi’s call is a blueprint—and a summons:

If legacy platforms erase, distort, or “adjust” us, let’s build the platforms that recognize us fully. The mirror doesn’t have to be broken. We can choose what, and whom, it reflects.

Ready to Move from Erasure to Authorship?

Dr. Akassi’s Nefertiti AI is an open invitation: for coders, culture-bearers, historians, artists, and everyone whose story deserves to be seen and preserved.

What does a sovereign AI look, feel, or sound like to you?

- Share your ideas and art with hashtag #NefertitiAI—your vision could shape the blueprint.

- Want to collaborate or join a roundtable on equitable tech? [Contact us here].

- Listen in on the next episode of The Neuroaesthetic MD as we continue this essential conversation.

Together, we can build a digital mirror that finally reflects our whole selves.

Want to go deeper into this conversation?

🎧 Don’t miss Episode 2 of The Future of Wellness, where I sit down with Dr. Monique Akassi, a scholar, Director of First-Year Writing at Howard University, and author of the upcoming AI Unveiled: The Fight for Justice in the Age of Algorithms, to unpack how AI, beauty standards, and cultural storytelling shape who is visible, who is erased, and how collective ritual can restore emotional sovereignty.

Because sovereignty isn’t just political.

It’s sensory.

And you, especially you, pretty lady, deserve to be seen, without distortion.

Listen to this episode on Spotify or stream it on your favorite platform, including Apple Podcasts, Amazon Music, and iHeartRadio.

Then, begin your soft return to self with the Color Archetype™ Quiz.

This blog post was authored by Dr. Stacey Denise (founder, The Neuroaesthetic MD and The Neuroaesthetic Reset™ Program) and published on July 29, 2025.

The corresponding podcast episode—The Future of Wellness, Episode 2—featuring Drs. Stacey Denise and Monique Akassi (Howard University professor and author of AI Unveiled: The Fight for Justice in the Age of Algorithms) was published June 17, 2025, establishing both authors’ priority and thought leadership in the domains of technocolonialism, algorithmic beauty bias, and sensory equity.

This post and episode predate the August 2025 release of Dr. Akassi’s book and documentary, and together serve as a public record of joint scholarly and creative contributions to equity in AI, digital wellness, and visual culture.

© 2025 Dr. Stacey Denise | The Neuroaesthetic MD & SDM Medical PLLC. All rights reserved.

For citation:

Moore, S.D., & Akassi, M. (2025). “Nefertiti AI: Claiming Sovereignty in the Age of Algorithmic Distortion.” Published July 29, 2025. The Neuroaesthetic MD | SDM Medical PLLC. https://orcid.org/0009-0007-6127-4194